What the heck is a Service Mesh, anyway?

Your microservices architecture can benefit immensely from a service mesh. Here's how.

Software applications can be thought of as ever-evolving organisms. As the number of active users of your apps increases, you will eventually have to confront the problem of scale.

One of the solutions that gradually evolved over the years to manage the challenges of scale was microservices. The design principle of a microservice-based architecture is to treat the application as a collection of individual, loosely coupled services. This offers several advantages over monoliths: faster development, more flexible deployment, fault tolerance and security, to name a few.

But designing a microservice-based architecture comes with its own set of challenges. For instance:

- How do you manage and visualize traffic flows between microservices?

- How do you keep the data flow between microservices secure?

- Observability: how do you measure the internal state of a particular service?

- How do you monitor and trace network requests?

For this, you need a service mesh. But first, let’s look at how a typical cluster is implemented using Kubernetes.

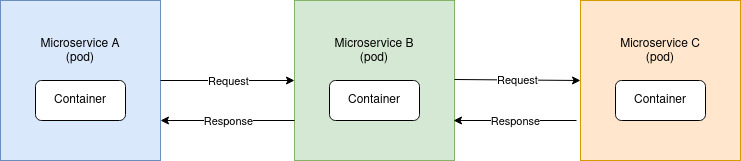

A conceptual container orchestration setup

Here is a diagram of a typical cluster in Kubernetes:

Here, microservice A could, for instance, use the services of microservice B, which in turn could rely on another microservice C. In a typical Kubernetes deployment, Pod A makes a network request to Pod B, which in turn makes a request to Pod C.

A container orchestration tool like Kubernetes helps you manage your pods, but it has little control over the actual data or network flow between them. What is happening on the network when one pod makes a request to another?

In a cluster with dozens or even hundreds of pods, you need visibility into the interconnections between the pods themselves. This is where a service mesh comes into play.

What is a Service Mesh?

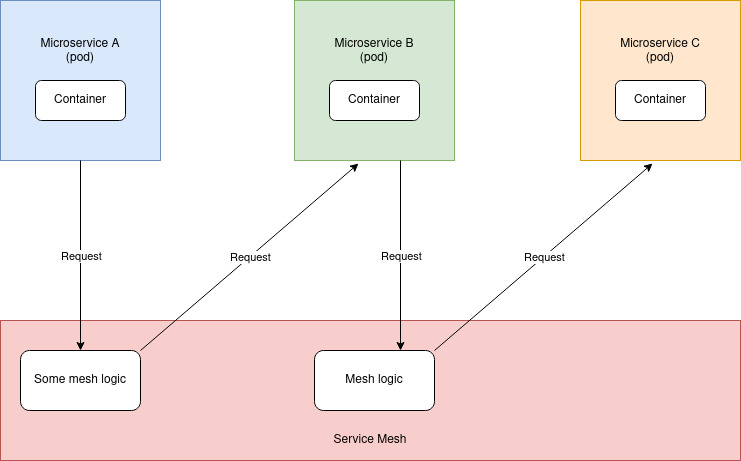

A service mesh is an extra layer of abstraction that you add to your cluster of services in order to efficiently manage and monitor them.

For instance, if you’re using Kubernetes, you can think of a service mesh as a layer of software that sits underneath your Kubernetes pods. This software takes control of every network request made between pods in the entire cluster.

Wait, but why though?

The service mesh software intercepts each network request and routes it to the intended container. In doing so, it can apply some mesh logic to the request and do some pretty interesting things with it. For instance:

- Measure the time taken for the network request to complete.

- Collate network errors that occur in any part of the cluster.

- Implement security - for instance, all network calls must be encrypted.

- Re-route requests based on given criteria or when certain conditions are met.

- Visualize and manage network traffic.
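To make the re-routing item more concrete, here is a minimal Python sketch of the kind of decision a mesh proxy could make for each request: match on request headers first, then split the remaining traffic by weight (for example, sending 10% of requests to a new version). The `Route` type, the `x-user-group` header and the `reviews-v1`/`reviews-v2` service names are hypothetical; Istio and other meshes express such rules in their own configuration formats rather than in application code.

```python
import random
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Route:
    """A hypothetical routing rule: header conditions plus weighted destinations."""
    match_headers: Dict[str, str]        # every header listed here must match exactly
    destinations: List[Tuple[str, int]]  # (service name, relative weight)


def choose_destination(headers: Dict[str, str], routes: List[Route],
                       rng: Callable[[], float] = random.random) -> Optional[str]:
    """Return the service a request should go to, or None if no rule matches.

    Rules are checked in order and the first match wins; within that rule a
    destination is picked with probability proportional to its weight.
    """
    for route in routes:
        if all(headers.get(k) == v for k, v in route.match_headers.items()):
            total = sum(weight for _, weight in route.destinations)
            point = rng() * total  # a random point in [0, total)
            for service, weight in route.destinations:
                point -= weight
                if point < 0:
                    return service
            return route.destinations[-1][0]  # guard against float rounding
    return None


if __name__ == "__main__":
    routes = [
        Route({"x-user-group": "beta"}, [("reviews-v2", 100)]),  # beta users always go to v2
        Route({}, [("reviews-v1", 90), ("reviews-v2", 10)]),     # everyone else: 90/10 split
    ]
    print(choose_destination({"x-user-group": "beta"}, routes))  # reviews-v2
    print(choose_destination({}, routes))  # reviews-v1 roughly 90% of the time
```

Passing `rng` as a parameter keeps the weighted choice deterministic in tests while defaulting to real randomness in use.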

Without a service mesh, it would be pretty difficult to implement the above.

Indeed, some of the most useful features of a service mesh involve telemetry: monitoring the health and security of microservices, measuring their performance, and collecting useful statistics such as memory overhead, CPU utilization and more.
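As a rough illustration of the request-level side of telemetry, the Python sketch below aggregates the kind of per-request records a proxy could emit into per-service statistics: request count, error rate and 99th-percentile latency. The `RequestRecord` fields, the choice to count HTTP 5xx responses as errors, and the nearest-rank percentile method are assumptions made for this example, not how any particular mesh computes its metrics.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RequestRecord:
    """One observation a proxy might report per request (hypothetical schema)."""
    source: str
    destination: str
    latency_ms: float
    status: int


def summarize(records: List[RequestRecord]) -> Dict[str, dict]:
    """Aggregate records into per-destination request count, error rate and p99 latency."""
    by_dest: Dict[str, List[RequestRecord]] = defaultdict(list)
    for record in records:
        by_dest[record.destination].append(record)

    summary = {}
    for dest, recs in by_dest.items():
        latencies = sorted(r.latency_ms for r in recs)
        errors = sum(1 for r in recs if r.status >= 500)  # assumption: only 5xx counts as an error
        p99_index = max(0, math.ceil(0.99 * len(latencies)) - 1)  # nearest-rank percentile
        summary[dest] = {
            "requests": len(recs),
            "error_rate": errors / len(recs),
            "p99_ms": latencies[p99_index],
        }
    return summary


if __name__ == "__main__":
    records = [
        RequestRecord("A", "B", 12.0, 200),
        RequestRecord("A", "B", 15.0, 200),
        RequestRecord("A", "B", 30.0, 503),
        RequestRecord("A", "B", 420.0, 200),
        RequestRecord("B", "C", 5.0, 200),
    ]
    print(summarize(records))
```

Note how a single slow request dominates the p99 for service B even though its median latency is low; percentiles like this are why per-request telemetry is more informative than averages.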

Another feature of a service mesh is tracing. Tracing lets you visualize the network flow and follow the chain of requests made on behalf of a particular feature or service. The service mesh can gather information that enables faster debugging and bug resolution, or even feeds a time-series analysis to predict future outages of a particular service.
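Tracing works because every hop carries a shared trace ID plus the ID of the span (hop) that called it. The Python sketch below simulates the chain of microservices A, B and C described earlier and then rebuilds the call order from the collected spans. The header names `trace-id` and `parent-span-id` are hypothetical stand-ins for real propagation formats such as W3C Trace Context or B3, and the in-memory `collected` list stands in for a tracing backend.

```python
import uuid
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Span:
    trace_id: str             # shared by every hop of one request
    span_id: str              # unique to this hop
    parent_id: Optional[str]  # span_id of the caller, None for the first hop
    service: str


collected: List[Span] = []  # stands in for a tracing backend


def handle(service: str, headers: Dict[str, str], downstream: List[str]) -> None:
    """Simulate a service handling a request and then calling the next service."""
    span = Span(
        trace_id=headers.get("trace-id") or uuid.uuid4().hex,  # start a trace if none exists
        span_id=uuid.uuid4().hex[:16],
        parent_id=headers.get("parent-span-id"),
        service=service,
    )
    collected.append(span)
    if downstream:
        next_service, *rest = downstream
        # Propagate the trace context so the next hop joins the same trace.
        handle(next_service, {"trace-id": span.trace_id, "parent-span-id": span.span_id}, rest)


def call_chain(spans: List[Span], trace_id: str) -> List[str]:
    """Rebuild the order of services in one trace by following parent links.

    Assumes a linear chain, i.e. each span has at most one child.
    """
    child_of = {s.parent_id: s for s in spans if s.trace_id == trace_id}
    chain, current = [], child_of.get(None)
    while current is not None:
        chain.append(current.service)
        current = child_of.get(current.span_id)
    return chain


if __name__ == "__main__":
    handle("A", {}, ["B", "C"])
    print(call_chain(collected, collected[0].trace_id))  # ['A', 'B', 'C']
```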

Istio

Istio is a Kubernetes-native solution, used by several large-scale corporations such as Google, IBM and Microsoft. It supports workloads running on Kubernetes as well as on virtual machines.

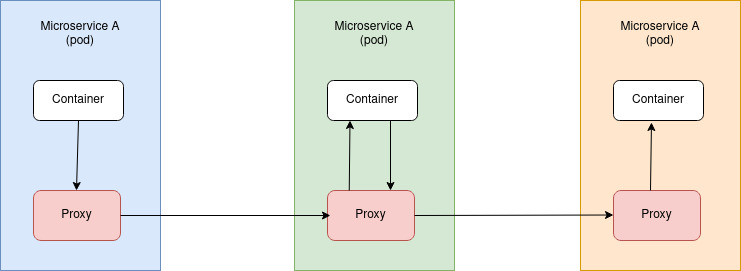

There are many different ways by which a service mesh could be implemented. Here’s how Istio implements it:

For each pod in the cluster, Istio injects an extra container, known as a proxy. Each proxy takes over all incoming and outgoing network requests for its pod. The proxy contains the mesh logic that processes each request for tracing and telemetry, and then forwards it to the proxy of the target pod.

Istio is designed to be loosely coupled. The application containers have no knowledge of the proxy injected by Istio, and they don’t need to contain any code for traffic management, tracing or the other features Istio provides. The pods continue to run and make network calls as though they were communicating directly with other pods.

This design of service mesh implemented by Istio is also known as the Sidecar pattern.
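The sidecar idea can be illustrated with a small Python analogy: the application function below contains only business logic, while a wrapper object sees every request, times it and records its outcome. This is only an in-process sketch under simplifying assumptions; in Istio the proxy is a separate Envoy container and the pod’s traffic is redirected to it at the network level, so neither `SidecarProxy` nor `reviews_app` corresponds to a real Istio API.

```python
import time
from typing import Callable, List, Tuple


def reviews_app(request: dict) -> dict:
    """Hypothetical application code: business logic only, no metrics or tracing."""
    if request.get("id") is None:
        raise ValueError("missing id")
    return {"status": 200, "body": f"reviews for {request['id']}"}


class SidecarProxy:
    """In-process analogy of a sidecar: it sits in front of the app and sees every
    request, while the app itself is unchanged and unaware of it."""

    def __init__(self, service_name: str, app: Callable[[dict], dict]):
        self.service_name = service_name
        self.app = app
        self.log: List[Tuple[str, int, float]] = []  # (service, status, latency in ms)

    def handle(self, request: dict) -> dict:
        start = time.perf_counter()
        try:
            response = self.app(request)
        except Exception:
            # Report an application failure as a 500 so it still shows up in the log.
            response = {"status": 500, "body": "internal error"}
        latency_ms = (time.perf_counter() - start) * 1000
        self.log.append((self.service_name, response["status"], latency_ms))
        return response


if __name__ == "__main__":
    proxy = SidecarProxy("reviews", reviews_app)
    proxy.handle({"id": 42})
    proxy.handle({})
    print([(svc, status) for svc, status, _ in proxy.log])  # [('reviews', 200), ('reviews', 500)]
```

The point of the design is that `reviews_app` never changes: metrics, error handling and logging are added from the outside, which mirrors how Istio adds its features without touching application code.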

Other implementations

Similar to Istio, there are other popular implementations as well:

- Linkerd: Claims to be lighter and simpler than any other service mesh implementation. It’s Kubernetes-only and has its own proxy, written in Rust.

- Consul: A full-featured service management system. It runs as a daemon on every Kubernetes node and works with Envoy.

Here’s an article that compares these implementations.

Istio under the hood

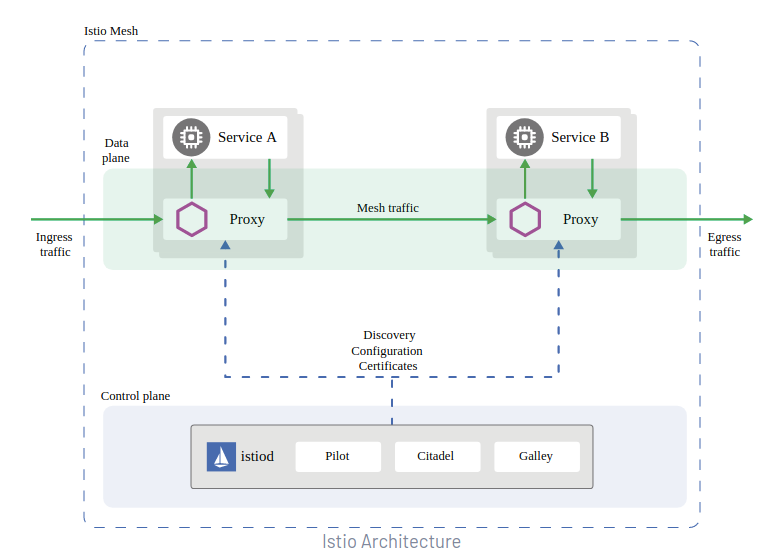

Istio assembles several components, some of them third-party, in order to construct a service mesh for your cluster.

Data plane

Under the hood, Istio uses Envoy, an open-source, cloud-native service proxy, for the proxy containers within your pods. These proxies make up Istio’s data plane.

Control plane

The control plane consists of three major components:

Galley: If you’re using Kubernetes, Galley reads your Kubernetes YAML file and transforms it into a format that Istio understands. It can also work with other orchestration frameworks such as Mesos and Consul.

Pilot: This component takes those configurations, converts them into a format that Envoy understands, and pushes them to the proxies.

Citadel: Responsible for certificate management, which enables mutual TLS (mTLS) across your entire cluster.
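The work split between Galley and Pilot described above can be pictured as a two-step pipeline: validate the user’s configuration, then translate it into a table the proxies can consult. The sketch below is a deliberately simplified, hypothetical model in Python; the rule dictionaries, the `validate`/`translate` functions and the `host|subset` naming are invented for illustration and are not Istio’s or Envoy’s actual formats.

```python
from typing import Dict, List, Tuple


def validate(rule: dict) -> dict:
    """Galley-like step (hypothetical): reject malformed configuration early."""
    if not rule.get("host"):
        raise ValueError("rule is missing a host")
    total = sum(route["weight"] for route in rule.get("routes", []))
    if total != 100:
        raise ValueError(f"weights for {rule['host']} sum to {total}, expected 100")
    return rule


def translate(rules: List[dict]) -> Dict[str, List[Tuple[str, int]]]:
    """Pilot-like step (hypothetical): turn validated rules into a flat table that
    every proxy could consult: host -> [(upstream cluster name, weight), ...]."""
    table: Dict[str, List[Tuple[str, int]]] = {}
    for rule in map(validate, rules):
        table[rule["host"]] = [
            (f"{rule['host']}|{route['subset']}", route["weight"]) for route in rule["routes"]
        ]
    return table


if __name__ == "__main__":
    rules = [{"host": "reviews",
              "routes": [{"subset": "v1", "weight": 90}, {"subset": "v2", "weight": 10}]}]
    print(translate(rules))  # {'reviews': [('reviews|v1', 90), ('reviews|v2', 10)]}
```

Validating before translating means a bad rule is rejected once, centrally, instead of being pushed to every proxy in the cluster.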

Since Istio version 1.5, the above components have been combined into a single binary called istiod.
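The certificates that Citadel (now part of istiod) manages are what allow proxies to use mutual TLS, in which both sides of a connection present and verify a certificate. The Python sketch below shows that idea with the standard `ssl` module: the server requires a client certificate, and the client verifies the server. In Istio this happens inside the Envoy proxies rather than in application code; the function names and certificate file paths here are hypothetical placeholders.

```python
import ssl
from typing import Optional


def server_context(cert_file: Optional[str] = None, key_file: Optional[str] = None,
                   ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Server side of mutual TLS: present our certificate AND require one from the client."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.verify_mode = ssl.CERT_REQUIRED  # requiring a client certificate makes the TLS "mutual"
    if cert_file:
        ctx.load_cert_chain(cert_file, key_file)  # our own identity certificate and key
    if ca_file:
        ctx.load_verify_locations(ca_file)        # trust only this CA for client certificates
    return ctx


def client_context(cert_file: Optional[str] = None, key_file: Optional[str] = None,
                   ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Client side of mutual TLS: verify the server and present our own certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)  # verifies server cert and hostname by default
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file:
        ctx.load_cert_chain(cert_file, key_file)  # certificate the server will check
    if ca_file:
        ctx.load_verify_locations(ca_file)        # CA used to verify the server
    return ctx


if __name__ == "__main__":
    print(server_context().verify_mode, client_context().verify_mode)
```

With real certificate files in place, a server would wrap its socket with `server_context(...).wrap_socket(sock, server_side=True)`; the hard part a mesh automates is issuing and rotating those certificates for every workload.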

At Egen, we’ve put together a video that explains in depth how Istio works, with a bunch of use cases showing what you can do with it. We also show a real-world example of a service not working as intended, and how Istio can help you narrow down the problem and apply an appropriate fix.

Thanks for reading

At Egen, we host several such meetups discussing scalability, distributed systems, application engineering and more. Follow us on collective.ac to get notified for future events.